The conversation around the latest SEO trends in 2026 has moved fast.

New AI search features, new tactics, and constant claims that search engine optimization needs a complete reset.

But when you look at how search engines are actually working, the fundamentals haven’t collapsed. The same web pages still drive search results. They’re just being selected differently.

That shift comes from retrieval-augmented generation (RAG)—the system behind AI overviews, LLM citations, and most modern AI search engines.

In this article, I’ve covered everything that’s actually changed in SEO, what’s being overstated, and what we still don’t fully understand, so you can stay ahead.

How search is changing in 2026

SEO trends in 2026 are all about AI search. But there’s a clear gap right now between how AI search is being talked about and what the data actually shows.

AI is now embedded across search results, but that doesn’t mean the ecosystem itself has split into something new.

A Surfer study of 400,000+ searches found that while AI overviews appear in 47% of queries, 52% of the sources they cite also rank in the top 10 organic results.

That tells us that AI search results are still built on top of traditional search rankings, not separate from them. Strong performance in organic search continues to translate into visibility across AI systems.

But the way that visibility is earned is starting to change.

The mechanism for maintaining that advantage has fundamentally shifted, and it starts with understanding how AI retrieval actually works.

1. RAG is quietly reshaping what it means to rank

Most AI search engines don’t generate answers from memory alone. They follow a two-step process:

- Retrieve relevant web pages

- Generate an answer using those sources

This is called retrieval-augmented generation (RAG), and it powers most modern AI search results.
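To make the two steps concrete, here’s a minimal sketch in Python. It is a toy, not how any real AI search engine works: the URLs and page text are made up, word overlap stands in for semantic relevance, and `generate` is a placeholder for the language model.

```python
from collections import Counter

# Toy corpus; URLs and text are made up for illustration.
PAGES = {
    "example.com/rag-guide": "retrieval augmented generation retrieves web pages then generates an answer",
    "example.com/keyword-tips": "keyword density tips for ranking blog posts",
    "example.com/ai-search": "ai search engines retrieve sources and cite them in generated answers",
}


def score(query: str, text: str) -> int:
    """Count words shared by query and page (a crude stand-in for semantic relevance)."""
    q, t = Counter(query.lower().split()), Counter(text.lower().split())
    return sum((q & t).values())


def retrieve(query: str, k: int = 2) -> list:
    """Step 1: keep the k best-scoring pages, dropping pages with no overlap."""
    ranked = sorted(PAGES, key=lambda url: score(query, PAGES[url]), reverse=True)
    return [url for url in ranked[:k] if score(query, PAGES[url]) > 0]


def generate(query: str, sources: list) -> str:
    """Step 2: placeholder for the model, which only sees the retrieved pages."""
    return f"Answer to '{query}' based on: " + ", ".join(sources)


query = "how do ai search engines retrieve sources"
sources = retrieve(query)
answer = generate(query, sources)
```

Whatever `retrieve` leaves out never reaches `generate`, which is the point of the next paragraph.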

The first step (retrieval) is what changes how visibility works. If your page isn’t retrieved, it won’t be used in AI-generated answers, no matter how well it ranks in traditional search results.

This shifts the baseline for search engine visibility. In traditional SEO, you compete for rankings, but in AI search, you first compete to be retrieved by large language models.

And retrieval doesn’t rely on keywords the same way traditional SEO does. It’s driven more by:

- Semantic relevance (how well your content matches the topic)

- Entity clarity (how clearly concepts are connected)

- Topical coverage (how completely you answer the subject)

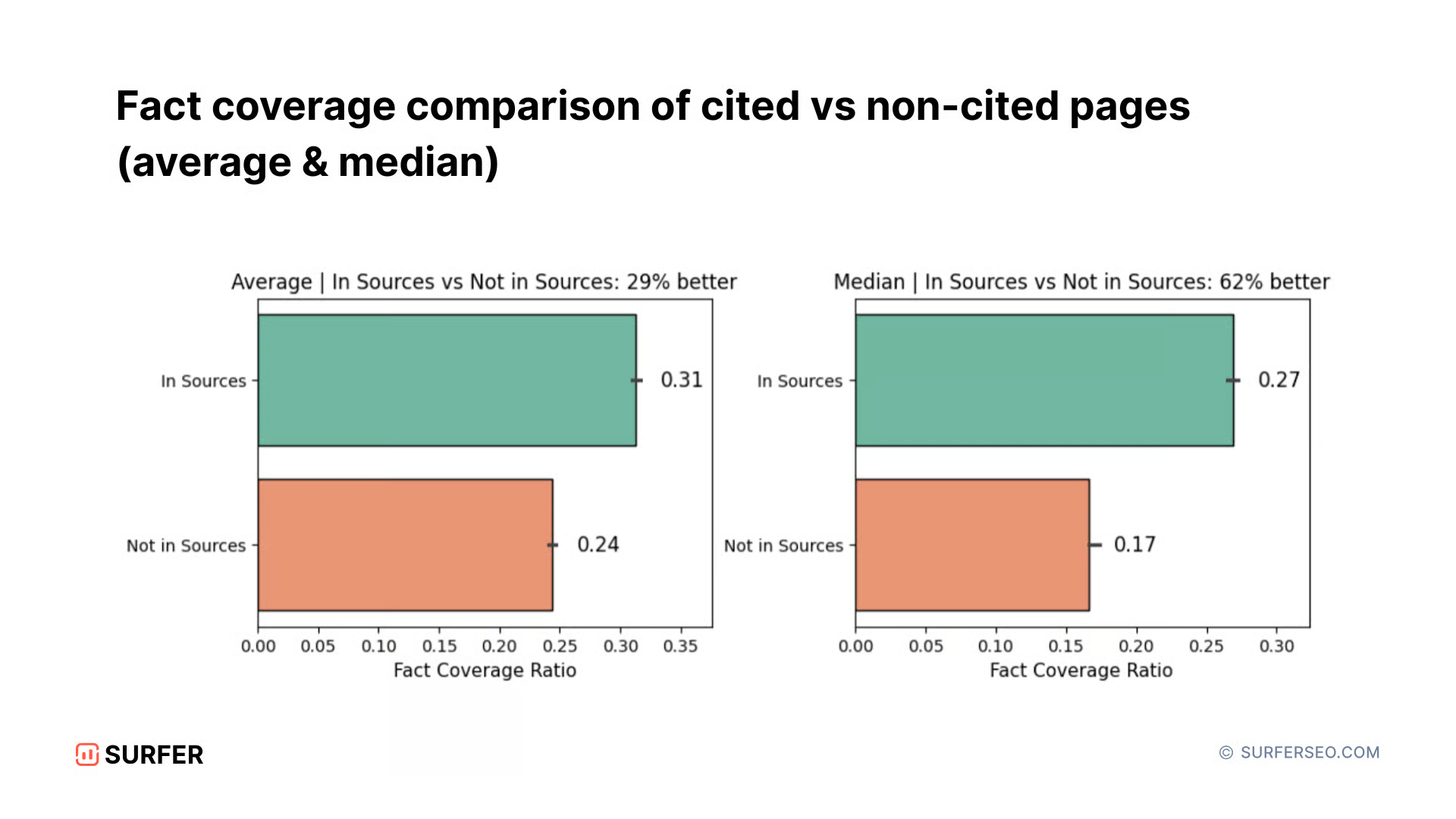

The data points in the same direction. A Surfer study of 57,000+ URLs found:

- Cited pages had 31% fact coverage, vs. 24% for non-cited pages

- Pages with 10+ key facts were cited at more than double the rate of pages with fewer than 5

The most frequently reused sources—pages cited across multiple answers—show nearly 2× higher fact coverage than pages that are never cited.

So the signal isn’t keyword density. It’s whether your content contains enough structured, extractable information for AI systems to reuse.
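If you want a rough feel for what “fact coverage” could look like on your own pages, here’s a small sketch. The study’s exact method isn’t described here, so this assumes the simplest possible definition: the share of a hand-picked list of key facts that appear verbatim in the page. The fact list and page text are made up.

```python
def fact_coverage(page_text: str, key_facts: list) -> float:
    """Share of key facts that appear verbatim (case-insensitive) in the page."""
    text = page_text.lower()
    found = sum(1 for fact in key_facts if fact.lower() in text)
    return found / len(key_facts) if key_facts else 0.0


# Hypothetical facts a complete page on the topic would be expected to state.
key_facts = [
    "47% of queries",
    "two-step process",
    "retrieval-augmented generation",
    "fan-out",
]
page = "AI Overviews appear in 47% of queries. They use retrieval-augmented generation."
coverage = fact_coverage(page, key_facts)  # 2 of the 4 facts are present
```

Exact string matching misses paraphrased facts, so treat the number as a floor rather than a precise score.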

At the same time, we’ve seen that retrieval isn’t consistent across platforms.

Different AI systems prioritize different signals. A page retrieved by ChatGPT may not appear in Gemini or other AI search engines for the same query.

There’s no unified playbook yet, but what’s clear is the direction: visibility now depends on whether your content can be retrieved, broken down, and reused—not just ranked.

2. AI overviews are RAG in action, and we’re still learning how they work

If RAG is the system behind modern AI search, AI Overviews are where you’ll see this actually play out. They’re the most visible example of how AI systems retrieve and assemble information from multiple sources to generate answers.

AI Overviews are already widespread: they appear in 47% of searches, with each response averaging ~157 words and citing around 5 sources. Most of them (about 78%) use lists, which makes them easier for both users and AI to scan and extract.

They also show up unevenly across search intent:

- Informational queries have a 70%+ trigger rate

- Commercial queries have a trigger rate of under 20%

So far, this all feels predictable. But what makes AI Overviews strange is how sources get selected.

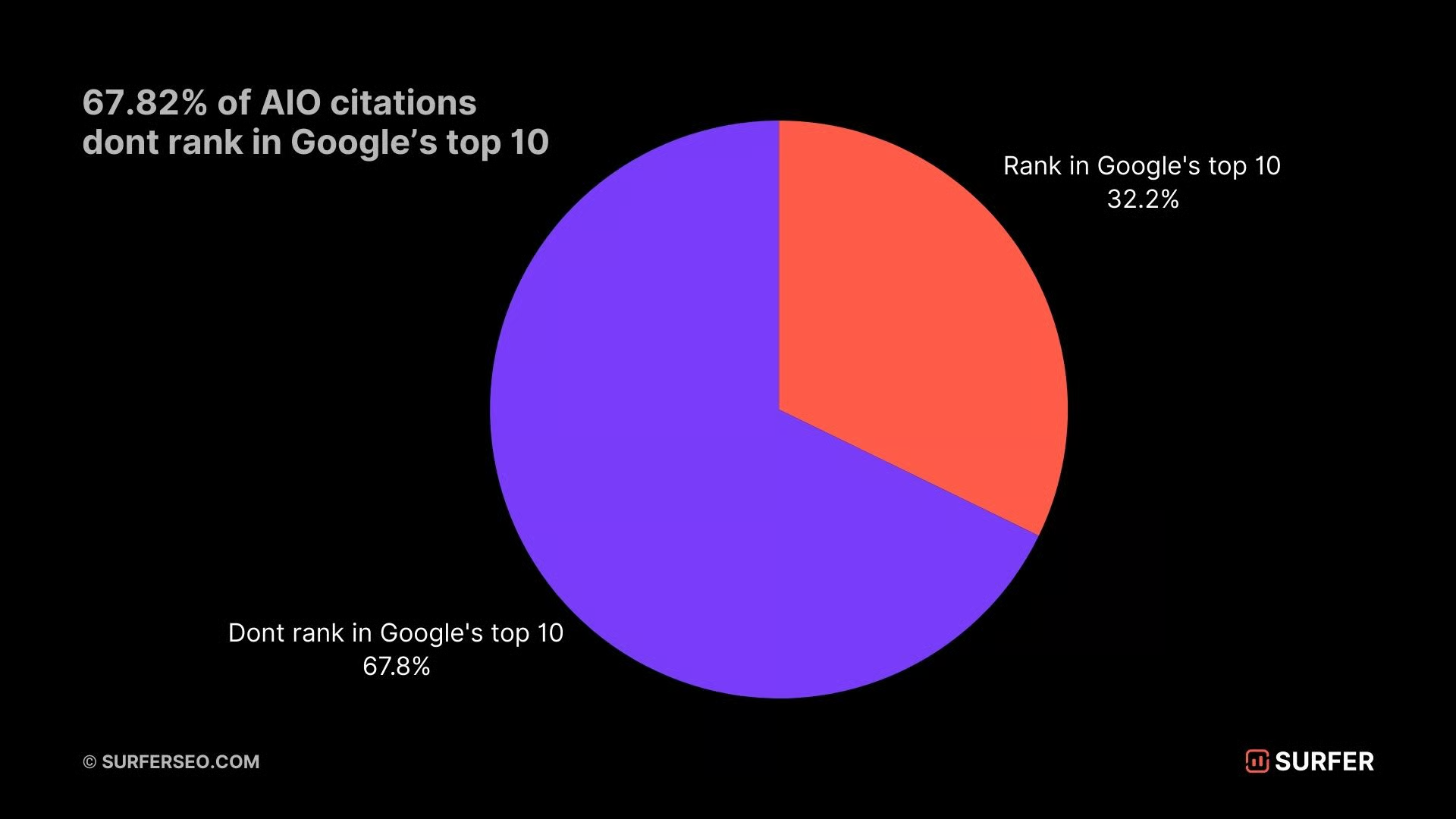

Surfer’s analysis reveals that 67% of pages cited in AI Overviews don’t rank in the top 10 for the main query, and featured snippets overlap with them in fewer than 25% of cases.

That breaks a core assumption of traditional SEO, where ranking high was the key to getting featured. Getting your page to rank high in Google still helps, but it’s no longer a reliable way to get cited in AI search answers.

It gets more counterintuitive when you look at relevance signals. One Surfer analysis of 30,000 pages across 1,000 queries found that:

- Early query relevance ranked 14th out of 15 factors for citation

- Pages strongly matching the query early were cited less than 30% of the time

- Early keyword placement had near-zero correlation with citation likelihood

Instead, cited pages were 2× more likely to match intent deeper in the content, not at the start. And that goes against how most content is structured today.

It also highlights how we still don’t have a clear explanation for why certain pages get cited.

What we have are directional signals, not rules.

AI overviews follow RAG, but the retrieval logic behind them is still evolving, which means a lot of current SEO strategies are based on patterns we’re observing, not systems we fully understand.

3. Server logs reveal what llms.txt really does

llms.txt was pitched as a simple fix for AI search visibility.

The idea was to add a plain text file (/llms.txt) into your root directory that acts as a curated sitemap for AI crawlers, pointing them to your most important web pages so they’re easier to retrieve and cite.

But across multiple server log studies, major AI crawlers aren’t requesting llms.txt files at all. In theory, it made sense, but the data doesn’t support it.

A CDN-level analysis of ~2 million AI-agent requests by Soniclinker found zero requests for /llms.txt from GPTBot, ClaudeBot, PerplexityBot, or similar systems.
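You can run the same kind of check on your own access logs. The sketch below assumes logs in the common “combined” format and matches the crawler names mentioned above as user-agent substrings. The sample lines are made up, and real user-agent strings are longer, so adjust the bot list and the pattern to your setup.

```python
import re
from collections import Counter

# User-agent substrings for the crawlers named above; extend as needed.
AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Combined log format: "METHOD path HTTP/x" status size "referer" "user-agent"
LINE_RE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def count_ai_requests(log_lines):
    """Return two Counters keyed by bot: all requests, and requests for /llms.txt."""
    totals, llms = Counter(), Counter()
    for line in log_lines:
        match = LINE_RE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        bot = next((b for b in AI_BOTS if b in match["ua"]), None)
        if bot is None:
            continue  # not one of the AI crawlers we track
        totals[bot] += 1
        if match["path"].split("?")[0] == "/llms.txt":
            llms[bot] += 1
    return totals, llms


# Made-up sample lines for illustration.
sample = [
    '203.0.113.5 - - [10/Mar/2026:10:00:00 +0000] "GET /blog/seo HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; GPTBot/1.0"',
    '203.0.113.6 - - [10/Mar/2026:10:00:01 +0000] "GET /robots.txt HTTP/1.1" 200 120 "-" "ClaudeBot/1.0"',
    '203.0.113.7 - - [10/Mar/2026:10:00:02 +0000] "GET /llms.txt HTTP/1.1" 200 300 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
totals, llms = count_ai_requests(sample)
```

In this sample the only request for /llms.txt comes from a regular browser, so the AI crawler count for that file stays at zero.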

The same pattern shows up when you look at outcomes. A Search Engine Land study tracked 10 websites over 90 days after implementing llms.txt:

- 8 sites saw no measurable change

- 1 site saw a 19.7% decline

- 2 sites improved—but due to new content, PR, and technical fixes, not the file itself

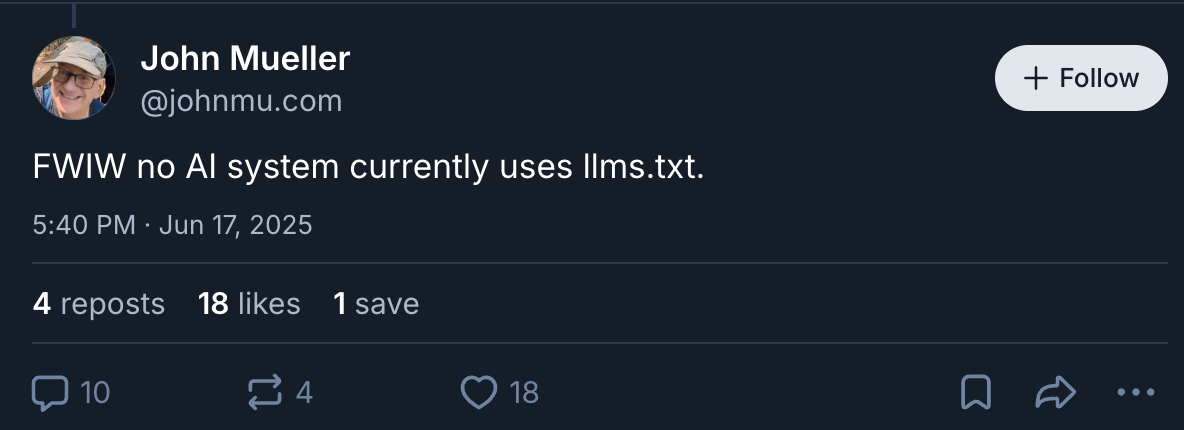

Even Google has been clear on this.

John Mueller confirmed that Google doesn’t use llms.txt for crawling or rankings. And no major AI company—Google, OpenAI, Anthropic, Meta, or Mistral—has officially adopted or enforced it.

But there also seems to be some visibility at the indexing level.

SEO analyst Crystal Carter has also pointed out on LinkedIn that while thousands of llms.txt files are indexed, there’s no evidence they’re actually being used in AI search results.

And this is where llms.txt becomes less about the tactic itself and more about a pattern.

The SEO industry has a long history of adopting “just in case” optimizations—ideas that sound directionally right, get picked up quickly, and turn into best practices before there’s any real evidence behind them.

llms.txt fits that pattern almost perfectly.

4. Markdown isn’t always necessary for search engines

Markdown is more efficient for AI systems to process than HTML. It removes most formatting and styling, so fewer tokens are spent on code and more on actual content. That gives models more room to retrieve and use information.

Cloudflare’s benchmark shows how big that difference can be:

- Blog posts dropped from 16,180 HTML tokens to 3,150 markdown tokens (~80% reduction)

- E-commerce pages saw up to 95% fewer tokens

- Even a heading uses ~3 tokens in markdown vs. 12–15 in HTML

So the underlying idea is valid. Less formatting = more usable content for AI retrieval.
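The percentages follow directly from the token counts. Here’s the arithmetic as a short Python helper, using only the figures quoted above:

```python
def token_reduction(html_tokens: int, markdown_tokens: int) -> float:
    """Percentage of tokens saved when the same content is served as markdown."""
    return (html_tokens - markdown_tokens) / html_tokens * 100


blog_post = token_reduction(16_180, 3_150)  # about 80.5
heading_low = token_reduction(12, 3)        # 75.0
heading_high = token_reduction(15, 3)       # 80.0
```

So a single heading saves 75–80% of its tokens, in line with the ~80% reduction for a whole blog post.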

Cloudflare has already built this into its infrastructure.

Their “Markdown for Agents” feature (launched in 2026) allows AI crawlers to request markdown using standard HTTP headers (Accept: text/markdown).

The page is then converted from HTML to markdown at the edge before being served.
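From the client side, that negotiation is just a request header. Here’s a minimal sketch using Python’s standard library. The URL is a placeholder, the HTML fallback with a lower `q` value is my own addition, and whether a given server honors the header depends entirely on its configuration.

```python
from urllib.request import Request


def build_markdown_request(url: str) -> Request:
    """Build a GET request that prefers markdown and falls back to HTML."""
    return Request(url, headers={"Accept": "text/markdown, text/html;q=0.8"})


req = build_markdown_request("https://example.com/blog/post")
# To send it: urllib.request.urlopen(req), then check the response's
# Content-Type header to see which format the server actually returned.
```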

This isn’t an SEO tactic, but more of an infrastructural change in how content can be delivered to AI systems.

The confusion comes from how this is being applied.

There’s a big leap between “markdown is more efficient” and “you should convert your site to markdown to rank better in AI search.”

There’s no evidence supporting that leap.

John Mueller addressed this directly on Bluesky, calling the idea a “stupid” approach and pointing out that flattening pages removes useful context—like navigation, layout, and semantic HTML—that helps both users and search engines understand content.

And more importantly, there’s no public data showing that serving markdown improves AI search results, increases citations, or impacts search rankings.

So the situation is fairly straightforward:

- The engineering argument is sound (markdown is more efficient)

- The SEO impact is unproven

Right now, this is an infrastructure play, not a content strategy.

It may influence how AI search engines process content over time, but treating it as a ranking tactic is getting ahead of the evidence.

5. GEO, AEO, and the rush to name new optimization categories

Over the past 12–18 months, a new set of acronyms has entered the SEO industry:

- GEO (Generative engine optimization): Focuses on getting your content cited and reused in AI-generated answers

- AEO (Answer engine optimization): Focuses on structuring content so it can be extracted directly into answers

- SRO (Semantic retrieval optimization): Focuses on improving how content is retrieved based on meaning, entities, and context

Each of these concepts tries to define how to optimize for AI search engines, but none of them has a shared definition, an agreed-upon scope, or a consensus on how performance should be measured.

Most of these terms are being shaped by the tools and vendors promoting them.

At the same time, nearly every tool is now selling some version of an “AI visibility score.” A growing number of AI visibility tools (along with rebranded existing ones) now promise:

- AI visibility tracking

- Prompt monitoring across platforms (ChatGPT, Gemini, Perplexity, Claude)

- Citation frequency, sentiment, and competitive positioning

This follows a familiar pattern we’ve seen before.

When content optimization tools took off between 2019 and 2021, the market went through the same phase—new categories appeared quickly, differentiation was unclear, and claims moved faster than the underlying capability.

But some of this taxonomy is actually useful.

A popular framework describes AI visibility as a 3-step progression:

- SEO enables retrieval

- AEO enables extraction

- GEO enables trust and repeated reuse

That sequence reflects a real shift in how AI systems work compared to traditional search engines.

But the terminology around it is still unstable, and it’s unclear how current GEO and AI tools measure any of it. When every vendor defines "AI visibility" differently, the metrics become impossible to compare, benchmark, or trust.

What these tools actually measure vs. what they promise

Most GEO and AEO tools claim to:

- Track brand mentions across platforms like ChatGPT, Gemini, and Perplexity

- Measure citation frequency

- Monitor sentiment and competitive positioning

- Provide optimization recommendations

Most rely on a method called prompt sampling.

They run a fixed set of queries through AI search engines and record whether your brand appears in the response. The problem is that LLMs don’t produce consistent outputs.

Responses change based on:

- User context

- Session history

- Model updates

- Even timing and load conditions

So the same query can return different results within hours, making “AI visibility scores” inherently noisy.

A study from Resoneo highlights a decline in the sources ChatGPT cites, which lowers individual brand coverage scores and skews comparisons across platforms.

In practice, this means:

- Running the same prompts on different days can produce different scores

- Different tools can report completely different results for the same brand
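One way to see how noisy a sampled score is: put an interval around it. The sketch below uses the Wilson score interval, a standard way to estimate the uncertainty of a proportion from a small sample. The run counts are hypothetical, and it assumes each run is independent, which real prompt runs may not be.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96):
    """95% Wilson score interval for the share of runs in which the brand appeared."""
    if runs == 0:
        return (0.0, 1.0)  # no data: the true rate could be anything
    p = hits / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return (max(0.0, centre - half), min(1.0, centre + half))


# Hypothetical sample: the brand appeared in 6 of 20 runs of the same prompt.
low, high = wilson_interval(6, 20)
```

With 20 runs, a measured 30% inclusion rate is compatible with a true rate anywhere from roughly 15% to 52%, so a swing of a few points between two reports says very little.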

This doesn’t make the tools useless. Tools like Surfer’s AI Tracker are still extremely valuable for:

- Checking whether your brand appears in AI search results at all

- Tracking directional trends over time

- Understanding how competitors are positioned in answers

But the outputs need to be interpreted carefully. Treat the scores from these AI visibility tools as directional rather than exact, because right now there’s no equivalent of Google Analytics for AI search.

New categories are being defined, tools are being built around them, and metrics are being productized—before the system itself is fully understood.

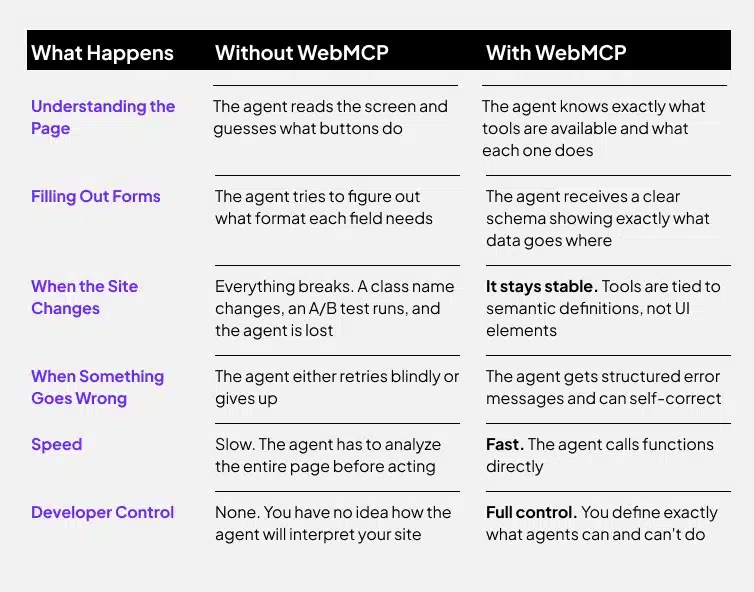

6. WebMCP and the agentic web are no longer theoretical

For a long time, the idea of AI agents interacting directly with websites felt theoretical.

WebMCP is where that starts to become real.

WebMCP (Web Model Context Protocol) lets websites expose their functionality as machine-readable tools for AI systems. Instead of taking screenshots and simulating clicks, agents can interact with a page through defined actions using the navigator.modelContext API.

So instead of just reading content, agents can actually use your site.

WebMCP is being developed with support from Google and Microsoft, and Chrome 146 shipped an early preview in February 2026, making it something developers can already test.

The main advantage is efficiency.

Today’s agent workflows rely on screenshots, which can take 2,000+ tokens to process. WebMCP replaces that with structured calls that use roughly 20–100 tokens each, with reported token savings of around 89%.

That difference matters because agent interactions are token-limited.

And this is where it connects to SEO.

If agents can book, compare, or complete actions directly on WebMCP-enabled sites, those sites become the ones that get used. Others don’t just rank lower—they get skipped.

This introduces a new layer of technical SEO that isn’t about content or links, but whether your site can be accessed and used by AI agents at all.

7. What an agent-driven web means for technical SEO

WebMCP points to a broader shift in how search works.

Traditional technical SEO is built around helping crawlers discover and index pages for humans. An agent-driven web changes that model.

Now the goal is to help AI agents complete tasks on behalf of users like booking appointments, purchasing products, comparing options, or filling out forms.

The optimization target shifts from a discoverable page to a usable service. That changes what “optimization” looks like in practice.

Being agent-readable means your site can clearly expose:

- What actions are available

- What inputs those actions require

- What outcomes they produce

This depends less on content and more on structure.

Things like:

- Clean, well-documented APIs

- Structured data and schemas

- Organized backend systems

- Clear action mapping (e.g., booking, checkout, comparison flows)

In other words, it’s not just about being indexed, but making your site actually usable.
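To picture what “clearly exposing” an action means, here’s a format-agnostic sketch in Python. It is not the WebMCP API or any other protocol’s schema. The `AgentAction` class, its fields, and the booking example are all hypothetical, and only illustrate the three things listed above: the action, its inputs, and its outcome.

```python
from dataclasses import dataclass, field


@dataclass
class AgentAction:
    """Hypothetical description of one action a site exposes to agents."""
    name: str
    description: str
    inputs: dict = field(default_factory=dict)  # parameter name -> expected format
    output: str = ""                            # what the action returns

    def missing_inputs(self, provided: dict) -> list:
        """List required inputs the agent has not supplied yet."""
        return [key for key in self.inputs if key not in provided]


book_appointment = AgentAction(
    name="book_appointment",
    description="Reserve a time slot with a practitioner.",
    inputs={"date": "ISO 8601 date", "time": "HH:MM", "service_id": "string"},
    output="Booking confirmation with a reference number",
)

# An agent that only knows the date can see exactly what else is needed.
missing = book_appointment.missing_inputs({"date": "2026-05-01"})
```

If you can’t write a description like this for your booking or checkout flow, an agent will probably struggle with it too.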

This shift isn’t limited to WebMCP. Other protocols are already emerging:

- Agentic Commerce Protocol (ACP): for transactions like checkout and product comparison

- Media control protocols: for subscriptions and content access

- The broader Model Context Protocol ecosystem: for defining how agents interact with different types of services

Each one points in the same direction: every industry will eventually need infrastructure that works with AI agents, not just human users.

At the same time, this is still early.

The WebMCP spec is currently a Draft Community Group Report, not a finalized W3C standard, and broader browser support isn’t expected until mid-to-late 2026.

Most sites don’t need to implement this yet, but it’s worth paying attention to.

A Semrush analysis from March 2026 compares WebMCP’s potential impact to Core Web Vitals—something that initially seemed optional but quickly became a competitive differentiator once adoption picked up.

The same pattern could play out here.

Once agents start completing real tasks across the web, the sites that support those interactions won’t just rank, but actually get used. Ultimately, this should help get website visits and clicks back on track as well.

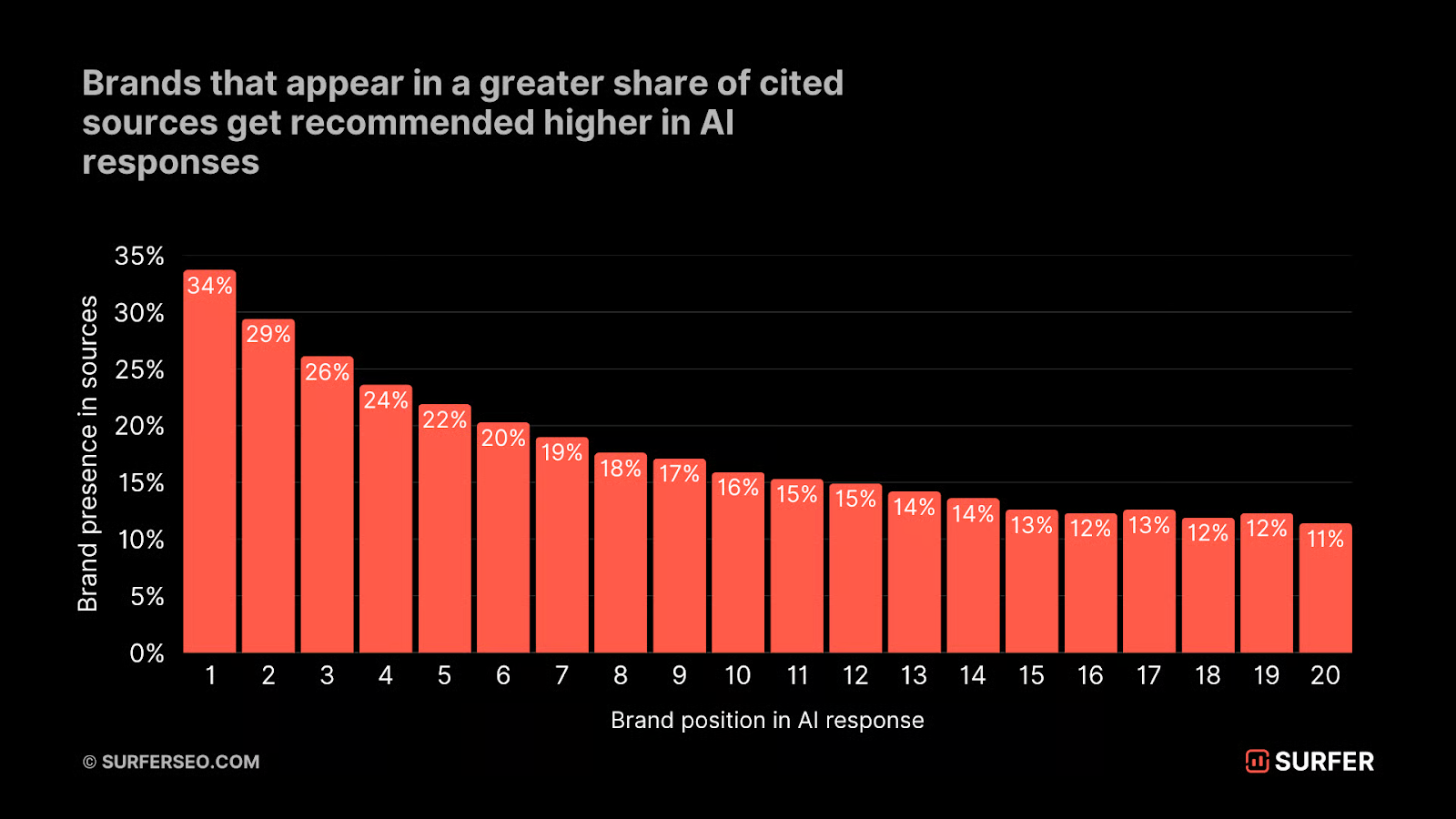

8. Third-party mentions and brand authority outweigh on-page tactics for AI search results

On-page optimization still gets your content into the system, but it’s no longer what determines whether your brand shows up in AI results pages.

Multiple studies now show that AI systems rely more on what the web says about you than what you say about yourself.

A Surfer analysis of 289,105 URLs found a ~0.41 correlation (Spearman’s rank coefficient) between how often a brand is mentioned across AI-cited sources and how strongly that brand is recommended.

This suggests that AI models are not just retrieving content—they’re validating it against broader web signals. When a brand appears consistently across trusted sources, it becomes easier for the system to surface and recommend it.
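For context on the number itself: Spearman’s coefficient compares rankings rather than raw values, so it captures “brands mentioned more often tend to be recommended more strongly” without assuming a straight-line relationship. Here’s a small self-contained sketch with made-up data for five brands:

```python
def ranks(values: list) -> list:
    """Assign 1-based ranks, giving tied values the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(x: list, y: list) -> float:
    """Spearman's rho: the Pearson correlation of the two rank lists."""
    rx, ry = ranks(x), ranks(y)
    n = len(x)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


mentions = [3, 10, 25, 40, 120]             # made-up mention counts per brand
recommendation = [0.2, 0.1, 0.5, 0.4, 0.9]  # made-up recommendation scores
rho = spearman(mentions, recommendation)    # 0.8 for this toy data
```

A value of 1 would mean the two rankings match perfectly and 0 would mean no relationship, so ~0.41 is a moderate association, not proof that mentions cause recommendations.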

The same trend becomes even more pronounced when you look at platform-level data.

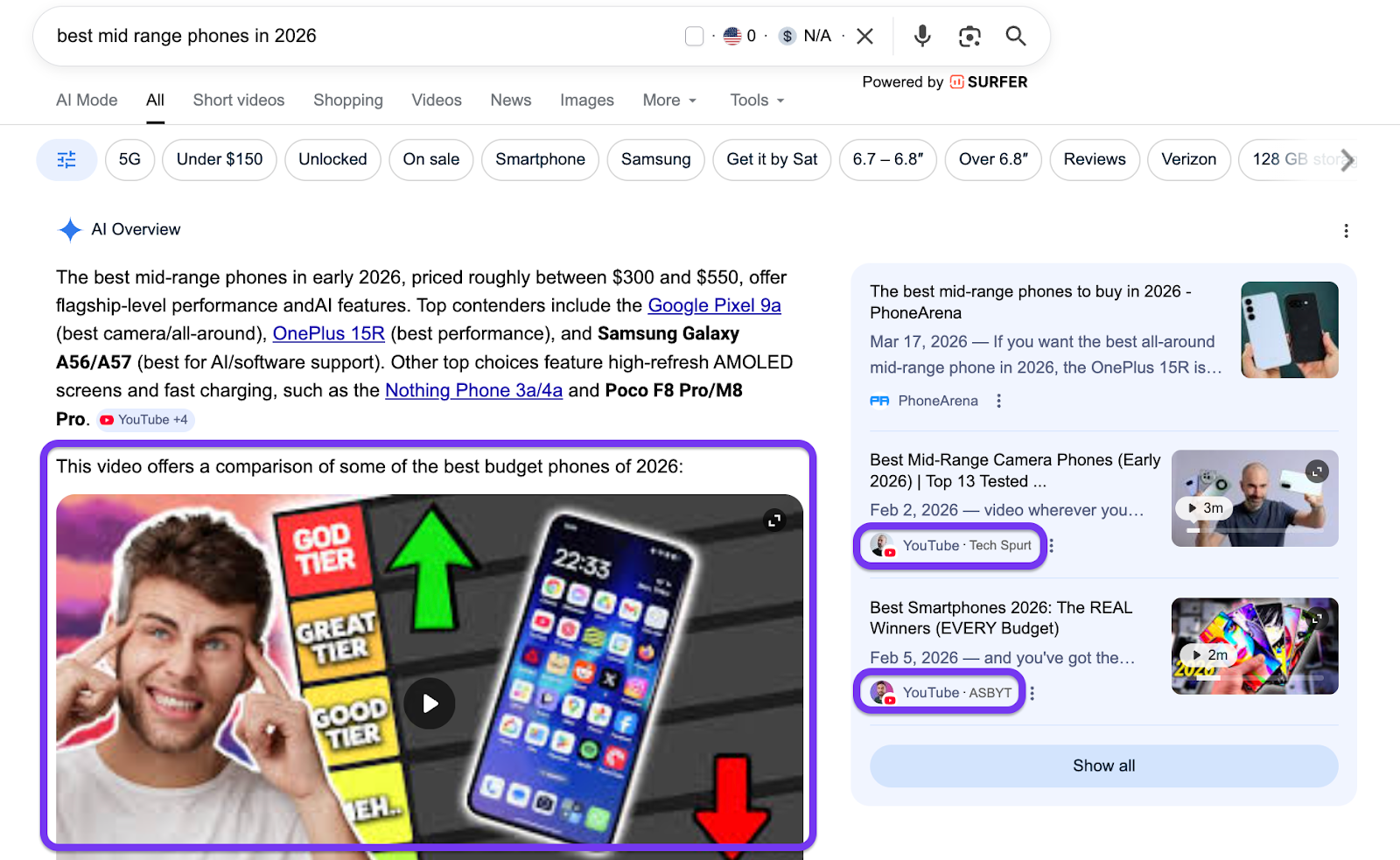

An Ahrefs study of 75,000 brands across ChatGPT, AI Mode, and AI Overviews found that the highest-correlating factor for AI visibility was not backlinks or on-page content. It was YouTube:

- YouTube video mentions have a ~0.737 correlation (strongest signal)

- Branded web mentions have a ~0.66–0.71 correlation

- News coverage, Reddit, and reviews also appear as strong AI visibility signals

At first glance, this may seem unexpected. Most AI systems are text-based, but they still rely on signals derived from platforms like YouTube, through video transcripts, descriptions, and engagement data tied to brand entities.

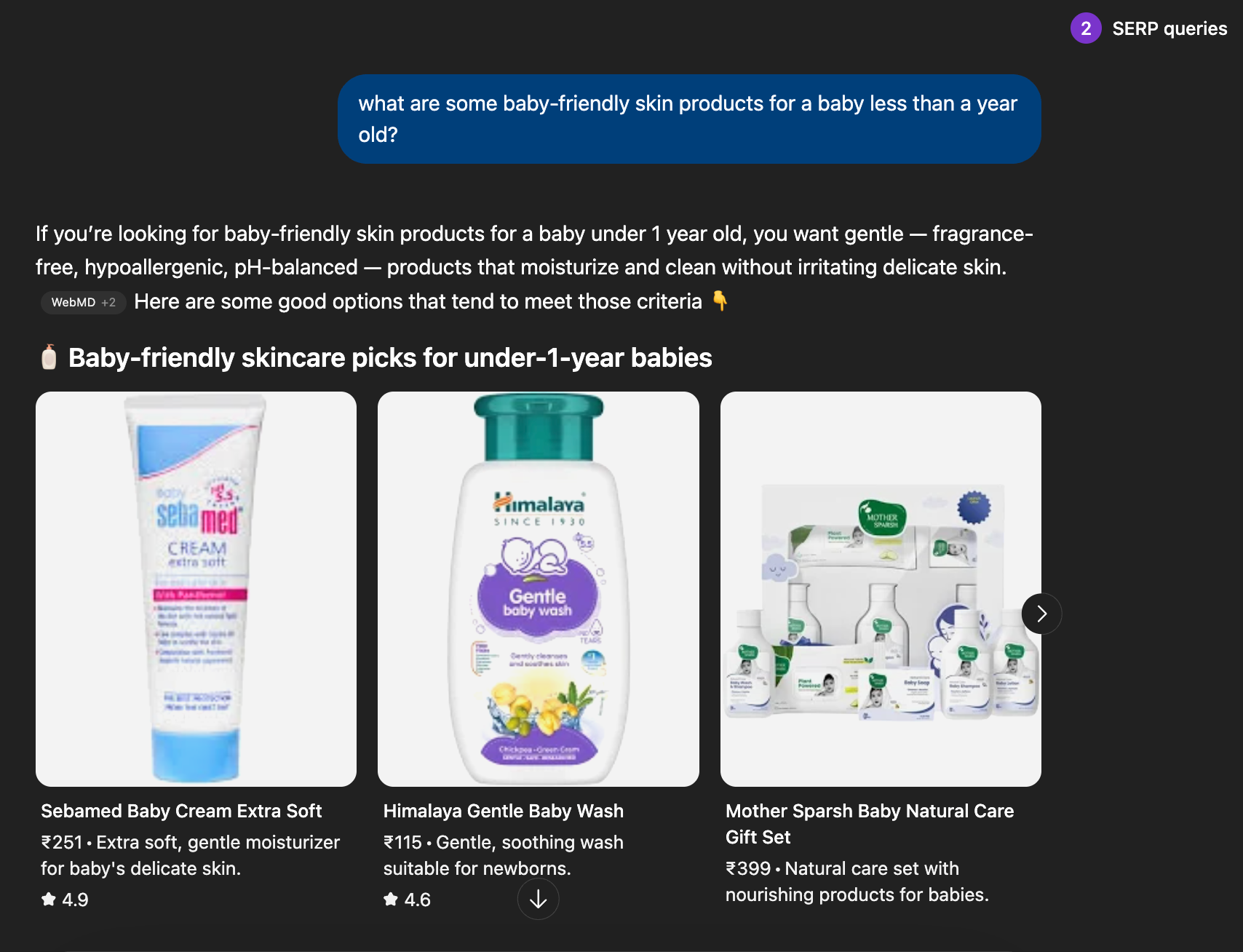

And you can see this for yourself. You’ll find a lot of Google AI Overview results actually cite YouTube videos as a source:

What this reflects is not the format of the content, but the presence of the brand across real-world conversations.

That presence has a measurable impact.

At the same time, different systems weigh these signals differently.

So there isn’t a single approach that works across all AI search engines, but the overall direction is clear.

If AI systems can’t find credible, third-party sources talking about your brand, they’re less likely to trust and reuse your content—regardless of how well it’s optimized.

This changes how SEO strategies need to be approached.

Digital PR, YouTube coverage, industry podcasts, reviews, mentions in trusted directories, and community discussions are no longer just brand-building activities. They directly influence whether your brand appears in AI search results at all.

9. Google still dominates, but users are using multiple platforms for search intent

Despite all the noise around AI search, Google still dominates.

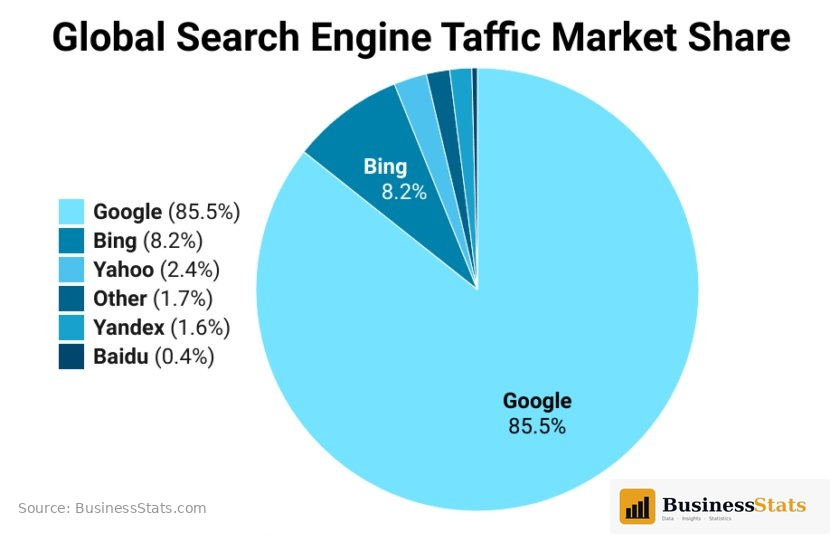

As of March 2026, Google holds roughly 90% of the global search market share—about 82% on desktop and 95% on mobile. So the idea that “Google is dying” isn’t supported by the data.

But what has changed is how people search alongside it. According to Apptopia, the usage of AI platforms is becoming more distributed:

- ChatGPT’s share dropped from ~69–86% in early 2025 to ~45–64% by early 2026

- Google Gemini grew rapidly, roughly quadrupling its share in the same period

- Around 20% of users now use multiple AI apps, rather than relying on just one

There are also signs that users are building longer-term habits across platforms.

Data from Apptopia also shows Claude’s churn rate dropping from 55% to 36% between August 2025 and February 2026—the largest improvement among major AI apps—suggesting users are sticking with multiple tools, not defaulting to a single one.

This doesn’t mean users are leaving Google. It means the information-seeking journey is expanding.

Search behavior now typically looks more like:

- Starting with ChatGPT or other AI tools for exploration

- Validating through Google search results pages and multiple websites

- Checking user-generated content like Reddit, YouTube, and reviews for social proof

This means visibility on one platform is no longer enough. Brands need to show up across multiple search environments, each with different retrieval and ranking systems.

At the same time, it’s important not to overcorrect.

ChatGPT had around 810 million monthly active users in early 2026, which is significant—but Google still processes billions of searches every day. For most sites, the majority of organic traffic still comes from traditional search engines.

So the shift isn’t about replacement, but more about expansion.

10. Brand authority matters more than ever

Google has been emphasizing E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) for years. In practice, Google uses E-E-A-T signals as social proof to understand what other people and sources say about you—not just what you claim about yourself on your own website.

It’s not enough to publish strong content on your own domain. Your brand needs to show up consistently across other websites—news reports, reviews, forums, videos, and industry content.

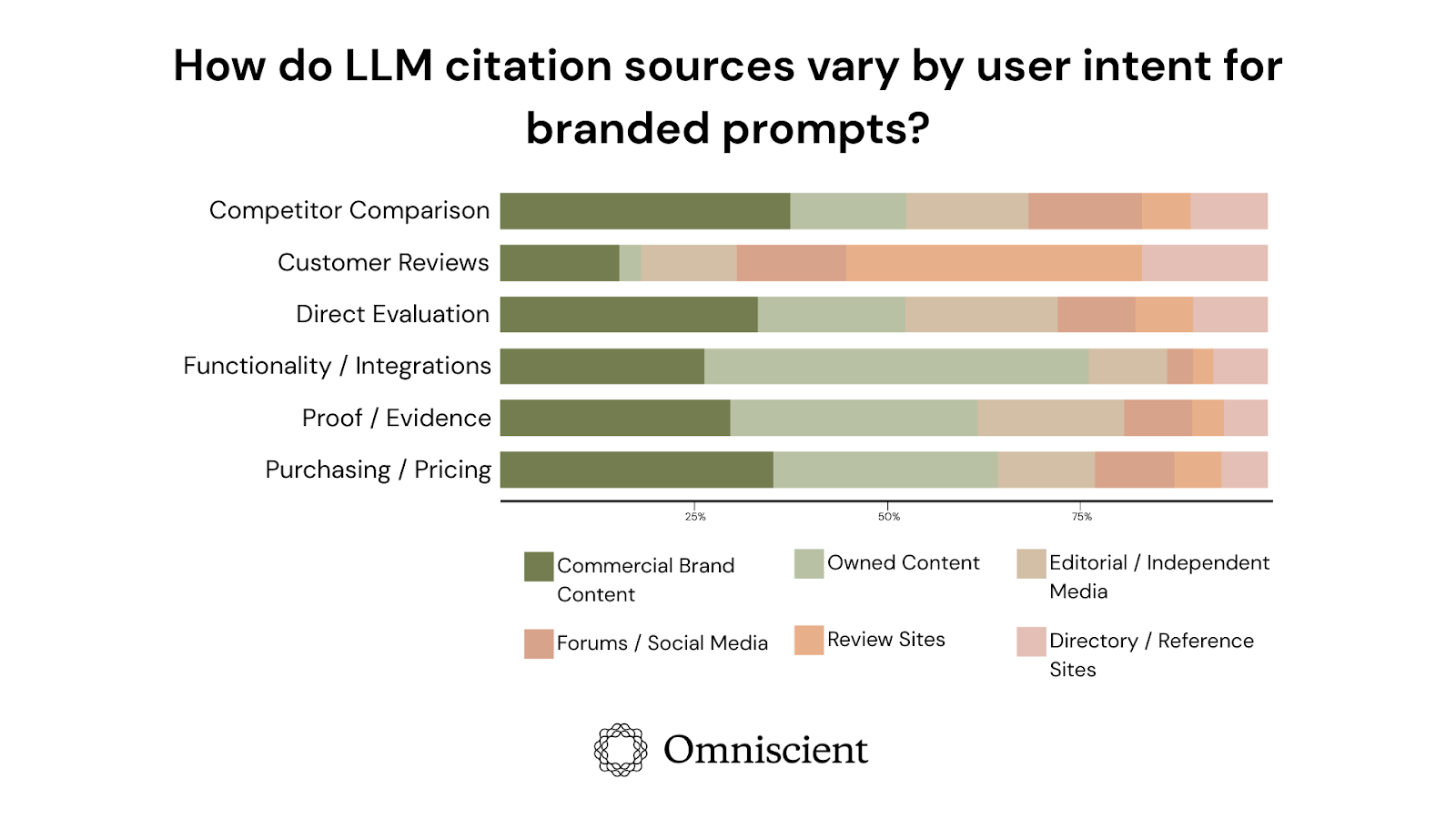

An analysis by Omniscient Digital of 23,000+ AI citations found that the breadth and quality of third-party coverage were a stronger predictor of AI visibility than domain authority or backlink profiles.

In other words, being a strong site isn’t enough. Your brand needs to be present across multiple trusted sources.

And you can see the same pattern in how Google rankings have shifted. Past behavior has shown that Google has consistently rewarded:

- Sites with clear subject-matter expertise

- Content backed by real experience

- Brands that are referenced across the web

At the same time, AI search systems use similar signals when deciding what to include in answers.

They don’t rely on a single page. They pull from multiple sources and cross-check information. If your brand appears across those sources, it’s more likely to be included. If it doesn’t, your own content has less weight on its own.

This has a direct impact on how SEO works.

Activities like PR, podcasts, YouTube, reviews, and community discussions are no longer separate from SEO. They directly influence whether your brand is retrieved, trusted, and reused in AI-generated answers.

Because in both traditional search and AI systems, visibility increasingly depends on whether your brand exists beyond your own website.

11. Good AI content can rank in Google search results

The conversation around AI-generated content has become oversimplified: it’s often framed as either “AI content is spam” or “AI content is the future.” The reality sits somewhere in between.

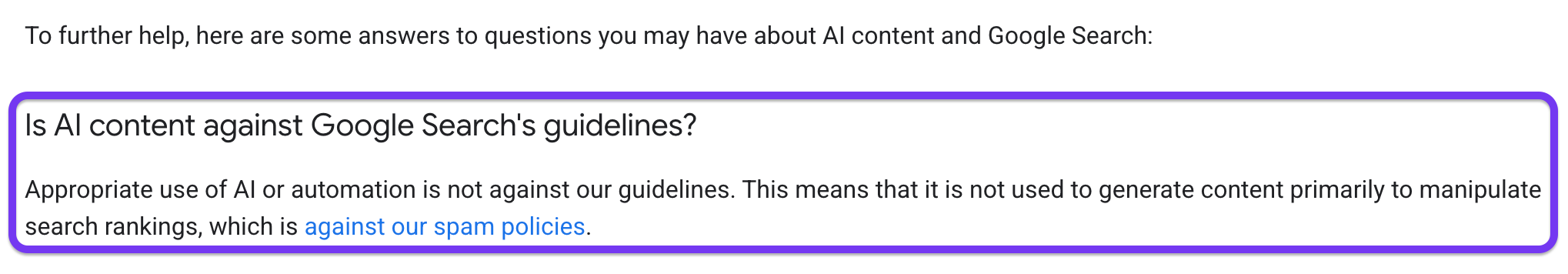

Google’s stance on AI-generated content has been consistent.

They don’t penalize content for being AI-generated. What they penalize is scaled content abuse—large volumes of low-value pages created primarily to manipulate search rankings.

Recent core updates reinforced this by drawing a clearer line between:

- Unedited, mass-produced AI output

- Content created with human expertise, oversight, and original input

Only the former gets hit, and the data reflects that shift.

A growing share of top-ranking pages now includes some level of AI assistance, so the tool itself isn’t the issue. What matters is whether the content demonstrates real expertise and adds something useful, accurate, or original, or simply rephrases what already exists.

In practice, the difference shows up in how the content is created.

- AI-assisted content that’s guided, edited, and backed by real expertise can perform well.

- Unreviewed AI output that repeats existing information tends to get ignored or demoted.

This is where the nuance becomes important. AI-assisted content can perform well, especially when it’s used to:

- Structure information

- Improve clarity and coverage

- Support content creation with human input

But human-first, experience-driven content still has a clear advantage at the very top of the search results page.

Marketer Milk is a great example of this.

The site is written entirely from lived experience by a single practitioner, and it became the #1 trending SEO website tracked by Gaps.com—outranking much larger, corporate-driven blogs. The author attributes this to Google increasingly rewarding genuine E-E-A-T signals over volume-driven strategies.

At the same time, more scaled, AI-assisted content can still rank—often across the middle to lower positions on page one.

So both approaches “work,” but they lead to different outcomes:

- Human-first, experience-driven content is more likely to win top positions and remain stable

- Scaled AI-assisted content can capture broader keyword coverage, but is more vulnerable to updates

AI is a powerful tool for content creation, but it doesn’t replace human expertise or strategic thinking.

And as both search algorithms and AI systems continue to prioritize credibility, the gap between “good enough” content and genuinely valuable content is becoming more visible in rankings.

12. Query fan-outs help land AI overviews

When people search for something on Google AI Mode or other AI search engines, the systems don’t just look for that exact query. They expand it into multiple related sub-queries, called query fan-outs, to build a complete answer.

So instead of answering one question, they’re pulling from several related ones to create more comprehensive AI-generated summaries for your query.

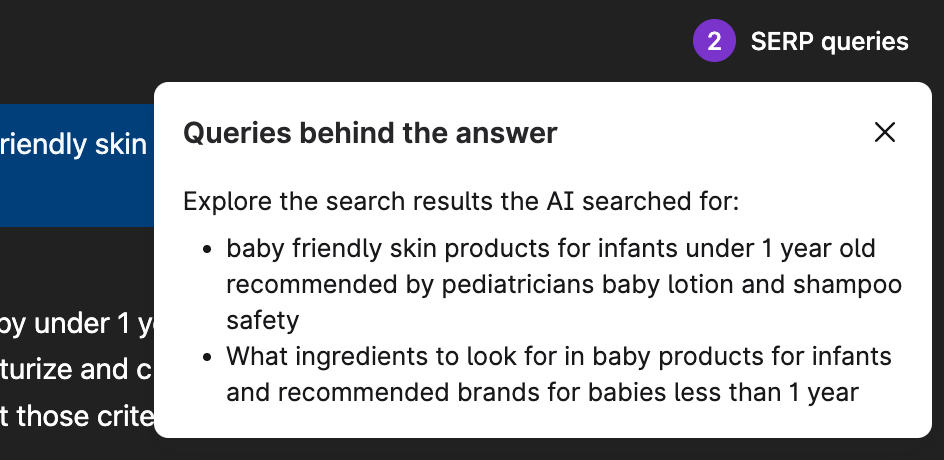

For example, here’s something I recently searched for on ChatGPT:

Using the free Keyword Surfer extension for Chrome, I can see which fan-out queries ChatGPT searched for:

ChatGPT then merges all the information it gathered from these fan-out searches into a single direct answer (with cited sources).

On average, an AI Overview generates around 6 fan-out queries, and more than 90% of searches trigger multiple variations.

This directly affects who gets cited. Search Engine Land found that pages are 161% more likely to be cited when they rank for both the main query and at least one related fan-out query.

And as coverage increases, so does visibility.

Pages ranking for 4+ related queries are cited more than 3x as often as those ranking for just one.

This also explains why many cited pages don’t rank highly for the main keyword.

A Surfer study shows that around 67% of AI Overview citations don’t come from the top 10 results for the main query. But many of those same pages rank across related queries—that’s what gets them picked up.

So the advantage isn’t coming from just one ranking page. It’s coming from topic coverage.
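If you already track rankings for a main keyword and its related queries, you can check this coverage yourself. The sketch below assumes you have the top-ranking URLs per query from whatever rank tracker you use. The queries and URLs are made up.

```python
def query_coverage(rankings: dict, main_query: str) -> dict:
    """For each URL, record whether it ranks for the main query and for how many fan-outs."""
    coverage = {}
    for query, urls in rankings.items():
        for url in urls:
            entry = coverage.setdefault(url, {"main": False, "fan_outs": 0})
            if query == main_query:
                entry["main"] = True
            else:
                entry["fan_outs"] += 1
    return coverage


# Made-up top results per query: one main query and three fan-outs.
rankings = {
    "best crm": ["a.com/crm", "b.com/top-crm"],
    "crm pricing comparison": ["c.com/guide", "a.com/crm"],
    "crm for small business": ["c.com/guide"],
    "crm integrations": ["c.com/guide", "b.com/top-crm"],
}
coverage = query_coverage(rankings, "best crm")
```

Here `c.com/guide` doesn’t rank for the main query at all but shows up for all three fan-outs, which is the profile of the pages described above.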

This means your search strategy shouldn’t just focus on a single keyword for a blog. SEO professionals and content marketing teams need to focus on:

- Answering the main query

- Covering related subtopics

- Addressing follow-up questions

And Surfer’s data reflects this clearly:

- 70%+ of cited pages rank for at least one fan-out query

- <40% rank for the main query alone

- Pages covering multiple queries exceed 60% citation rates, vs. <20% for single-query pages

In short, AI Overviews don’t reward single-keyword optimization or search volume like old-school search engine algorithms once did. They reward how well you cover the topic as a whole.

13. Search engine optimization has new metrics over old vanity goals

For years, SEO experts measured success using a familiar set of metrics like ranking for target keywords, search volume, organic traffic, and impressions.

Those key SEO metrics still matter, but they no longer tell the full story.

When 47% of searches trigger AI overviews, with a majority of them leading to zero-click searches, ranking #3 in search results doesn’t carry the same weight it used to.

This shift is pushing digital marketing professionals to look at new signals like:

- AI answer inclusion rate: Does your brand appear in AI-generated answers at all?

- Citation frequency: How often you’re mentioned across queries

- Brand mention sentiment: How your brand is positioned in responses

- AI referral traffic: Visits coming from ChatGPT, Perplexity, or Gemini

- Session signals: Whether users continue searching after visiting your page

This last metric is especially important.

In Moz’s 2026 trends roundup, Veronika Höller (Head of Demand Generation at Tresorit) highlights that session behavior—specifically, whether users keep searching after landing on your page—may become a core SEO signal.

That means, if users return to search immediately, your content likely didn’t satisfy their intent.

At the same time, measuring any of this precisely is still difficult because there’s no equivalent of Google Analytics for AI search yet.

Most tools rely on prompt sampling, where queries are run periodically across platforms to check for mentions. Because AI tools generate different responses depending on context, timing, and user state, these metrics can vary significantly.

That’s why most “AI visibility scores” should be treated as directional, not an exact measure.
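Of the signals listed earlier, AI referral traffic is the one you can measure from your own data rather than from sampled prompts. Here’s a minimal sketch that labels visits by referrer. The hostname list is an assumption on my part, so check it against the referrers you actually see in your analytics, since platforms change domains and some visits arrive with no referrer at all.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own analytics data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def ai_source(referrer: str) -> Optional[str]:
    """Return the AI platform a visit came from, or None for everything else."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


# Made-up referrer values from a batch of visits.
visits = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=crm",
    "https://www.google.com/",
    "",
]
by_platform = Counter(source for source in map(ai_source, visits) if source)
ai_share = sum(by_platform.values()) / len(visits)
```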

But new metrics aren’t the biggest shift to watch out for. We need to pay attention to what those metrics are tied to.

SEO measurement needs to connect back to actual outcomes like leads, revenue, and pipeline from your target audience—not just visibility.

Of course, this has always been true, but it becomes more important due to the unreliability of new AI metrics and how traditional metrics have become more surface-level.

Ranking, traffic, and impressions still matter. They’re just no longer enough on their own.

14. AI search is still closely tied to traditional SEO

With all the new terms, tools, and conversations around AI search, it’s easy to assume this is a completely separate discipline from traditional SEO.

But it isn’t.

Every major AI search engine still relies on existing web search infrastructure. ChatGPT pulls from Bing. Perplexity uses Google and Bing. Google’s own AI overviews are built on the same index that powers its organic results.

These systems aren’t retrieving from a separate “AI-optimized” web. They’re pulling from the same pages SEOs have been optimizing for years.

Plus, we’ve seen studies that show a lot of the sources cited by AI are already ranking in Google.

SEO in 2026 will be defined by AI-driven search, a focus on E-E-A-T, and a shift to user-generated content over synthetic AI content.

So the most reliable way to show up in AI search results is still strong SEO practices that we’ve always been following:

- Covering topics in depth

- Building real topical authority

- Creating content that can be retrieved and reused

AI has changed how content is selected and presented. But it hasn’t replaced the foundation it depends on.

So while the tactics are evolving, the core hasn’t shifted as much as it might seem.

Where SEO is actually headed in 2026

SEO trends are evolving fast, but the fundamentals haven’t shifted as much as it might seem.

Yes, AI search, RAG, and agent-driven systems are changing how content is retrieved and presented. But they still rely on the same web and the same underlying signals.

There’s also a clear gap between what’s being hyped and what’s actually supported by data.

A lot of the SEO trends being talked about right now don’t hold up:

- llms.txt isn’t being used by AI crawlers

- Markdown optimization hasn’t shown any impact on citations

- Many GEO tools measure different things, so their scores aren’t comparable

What is backed by data:

- Retrieval decides visibility: If your content isn’t retrieved, it won’t be used in AI answers.

- Topical coverage beats single keywords: Pages covering related queries get cited more.

- Brand authority impacts citations: Mentions across trusted sources increase visibility.

- Agent-based systems are starting to matter: Sites that can be used by agents will have an advantage.

Ultimately, SEO trends in 2026 still reward genuinely useful content that can be easily found, is trusted beyond your own site, and covers the topic well enough to be reused.

The way content gets surfaced by the web is changing, but what actually makes it worth surfacing hasn’t.